Image to Manimation challenge

The first time, I let the LLM decide what to animate. This time I wanted to test whether the LLM could animate something I had outlined on a digital whiteboard for an LLM coaching session. I exported the whiteboard as an image and let Claude Code loose on it.

It’s not the biggest challenge yet, but in just over 2 hrs and 37 prompts, I was able to get Claude to generate a version that I found acceptable.

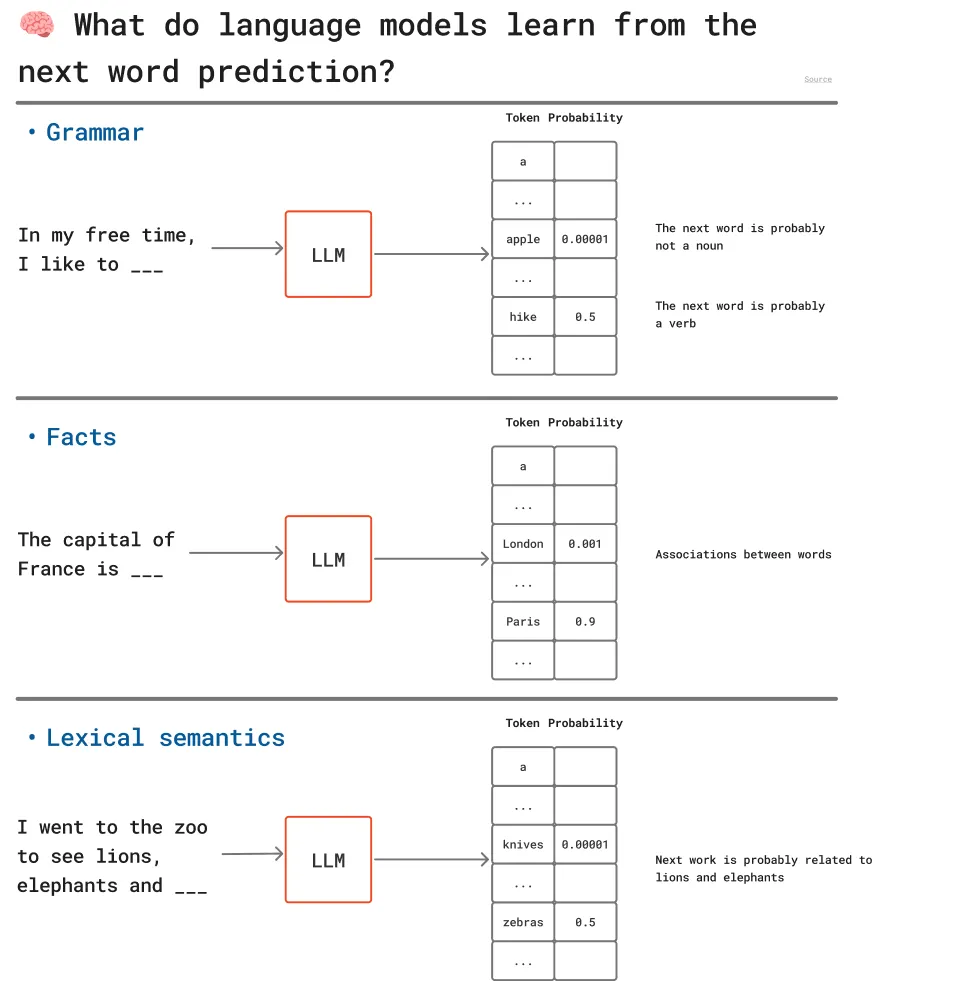

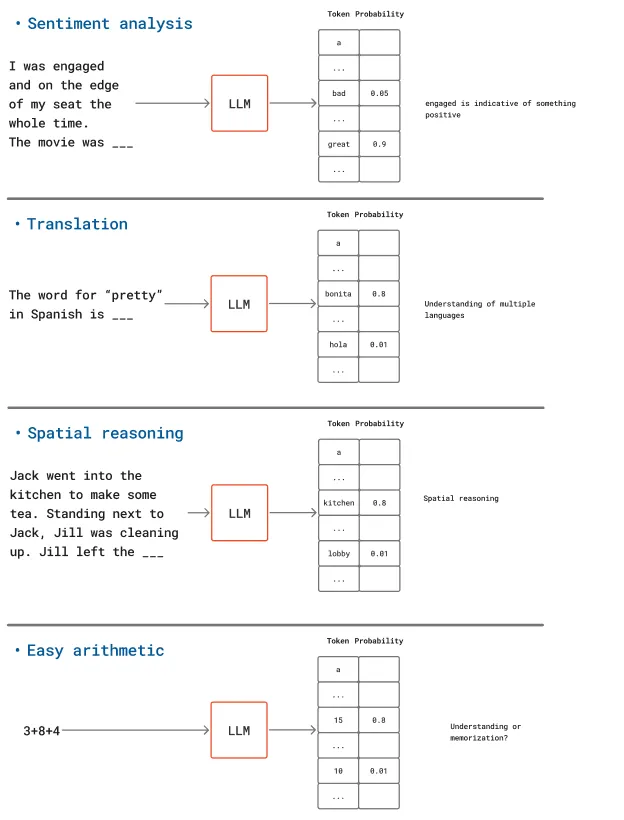

Input

I had to split the image into two, as Claude Code couldn’t take in the large image.
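A minimal sketch of how such a split could be planned in code. It only computes crop boxes; the function name `split_boxes`, the example dimensions, and the choice of a vertical cut are assumptions for illustration, not how the original image was actually split.

```python
def split_boxes(width, height, parts=2, vertical_cut=True):
    """Return crop boxes as (left, upper, right, lower) tuples.

    vertical_cut=True slices the image into side-by-side pieces;
    vertical_cut=False slices it into stacked pieces.
    """
    boxes = []
    for i in range(parts):
        if vertical_cut:
            left = width * i // parts
            right = width * (i + 1) // parts
            boxes.append((left, 0, right, height))
        else:
            top = height * i // parts
            bottom = height * (i + 1) // parts
            boxes.append((0, top, width, bottom))
    return boxes


# Hypothetical whiteboard export of 4001 x 1500 pixels, cut into two halves.
halves = split_boxes(4001, 1500)
print(halves)
```

Each box uses the same (left, upper, right, lower) order that Pillow's `Image.crop` expects, so the halves could be cut and saved with that library if it is installed.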

source: (youtube.com/watch?v=0SuyDLjNR9g)

Inside the process

This time I saved all my prompts with timestamps and let Claude give me some insights into the process & prompts.
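For anyone who wants to run this kind of analysis without an LLM, here is a minimal sketch of how active time, average pace, and unique-prompt share could be computed from a timestamped prompt log. The log format, the `summarize` function, and the sample entries are all made up for illustration; this is not the analysis Claude actually ran.

```python
from datetime import datetime, timedelta


def summarize(prompts, max_gap=timedelta(hours=5)):
    """Summarize a list of (iso_timestamp, text) tuples sorted by time.

    Gaps of max_gap or longer are treated as breaks and excluded
    from the active time.
    """
    times = [datetime.fromisoformat(ts) for ts, _ in prompts]
    gaps = [later - earlier for earlier, later in zip(times, times[1:])]
    active = [g for g in gaps if g < max_gap]
    active_minutes = sum(g.total_seconds() for g in active) / 60
    # Prompts count as duplicates if they match after trimming and lowercasing.
    unique = len({text.strip().lower() for _, text in prompts})
    return {
        "prompts": len(prompts),
        "active_minutes": round(active_minutes, 1),
        "avg_gap_minutes": round(active_minutes / len(active), 2) if active else 0.0,
        "unique": unique,
        "unique_pct": round(100 * unique / len(prompts), 1),
    }


# Made-up sample log, including one long break between sessions.
log = [
    ("2025-01-01T09:00:00", "read the image"),
    ("2025-01-01T09:04:00", "read the image"),
    ("2025-01-01T09:10:00", "fix table alignment"),
    ("2025-01-01T15:30:00", "make the font bigger"),
    ("2025-01-01T15:33:00", "commit to repo"),
]
stats = summarize(log)
print(stats)
```

The 5-hour threshold mirrors the "Excluding 5+ Hour Gap" wording in the report below; a different cutoff would change the active-time figure.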

<<The text below is generated by Claude.>>

TOTAL ACTIVE WORK BREAKDOWN (Excluding 5+ Hour Gap)

Summary Metrics

- Total active time: 2.01 hours (121 minutes) across 37 prompts

- Average pace: 3.35 minutes between prompts

- Unique prompts: 32 (86.5% unique)

Work Distribution

Initial setup/read ███████████████ 8 (22%)

Design/planning ██ 1 (3%)

Implementation ████ 2 (5%)

Testing/debugging ██████ 3 (8%)

Visual fixes ██████████████████████████████ 16 (43%)

Refactoring ████ 2 (5%)

Documentation 0 (0%)

Repository setup ██ 1 (3%)

Key Insights

Visual fixes dominated the project - Nearly half of all prompts (43.2%) were focused on visual refinement: table alignment, fonts, colors, borders, fade effects, and annotation positioning. This suggests either:

- High visual quality standards

- Initial implementation needed significant polish

- Iterative discovery of visual issues through testing

Prompt Characteristics:

- Mostly short, focused prompts (avg 79 chars, 13.6 words)

- High specificity - 32.4% included file references

- 5 duplicate prompts showing iterative refinement

Front-loaded setup phase - 22% of prompts were reading documentation and initialization, mostly concentrated in the early part of Session 1.

Minimal direct implementation - Only 2 prompts (5%) were actual “write the code” commands. Most work was iterative refinement after initial implementation.

Low repetition rate - With 86.5% unique prompts, communication was generally efficient despite some early setup struggles.

Practical workflow - Testing/debugging (8%), refactoring (5%), and repo setup (3%) show a complete software development cycle in about 2 hours.

<<Now back to human generated text>>

Takeaways / Notes

- I had some trouble getting Claude to read the images, so there were some duplicate prompts

- Not captured: I made 3 manual changes in the code, all extremely minor (removing an italic style, moving one line above another so the animation ran in order from left to right, and a third change I can’t remember)

- Visual fixes took the most time. I need a better / faster workflow: I was waiting for the whole scene to render before I could give feedback.

- I could have asked it to design / generate one scene first before pulling in the rest, since all the others are the same. We (Claude and I ;)) eventually got there.
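On the render-wait problem noted above, one possible shortcut is to preview a single scene at low quality, or render only its final frame, instead of the full animation. The sketch below assumes the project uses the Manim Community Edition command line, where `-ql` selects low quality and `-s` saves only the last frame. The helper `preview_command`, the file name `scene.py`, and the scene name `Intro` are hypothetical.

```python
def preview_command(scene_file, scene_name, still=False):
    """Build a low-quality Manim render command for one scene.

    still=True adds -s, which renders only the final frame as an image.
    """
    cmd = ["manim", "-ql"]
    if still:
        cmd.append("-s")
    cmd += [scene_file, scene_name]
    return cmd


# Hypothetical file and scene names.
print(" ".join(preview_command("scene.py", "Intro")))
print(" ".join(preview_command("scene.py", "Intro", still=True)))
```

The returned list can be passed to `subprocess.run` as is. A still frame will not show timing or fades, so it mainly suits layout checks such as alignment, fonts, and colors.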

Github

The prompts and inputs are available in a repo.